TCS - Monoviz

What if affordable cars could see like Teslas—without the price tag? MonoViz explores how a single camera can unlock real-time 3D vision, bringing advanced driver assistance to the masses.

Client

TCS - Tata Consultancy Services

Year

2024

Category

ADAS + ML + Computer Vision

Project Link

Visit Site

Vision & Problem Statement

Vision:

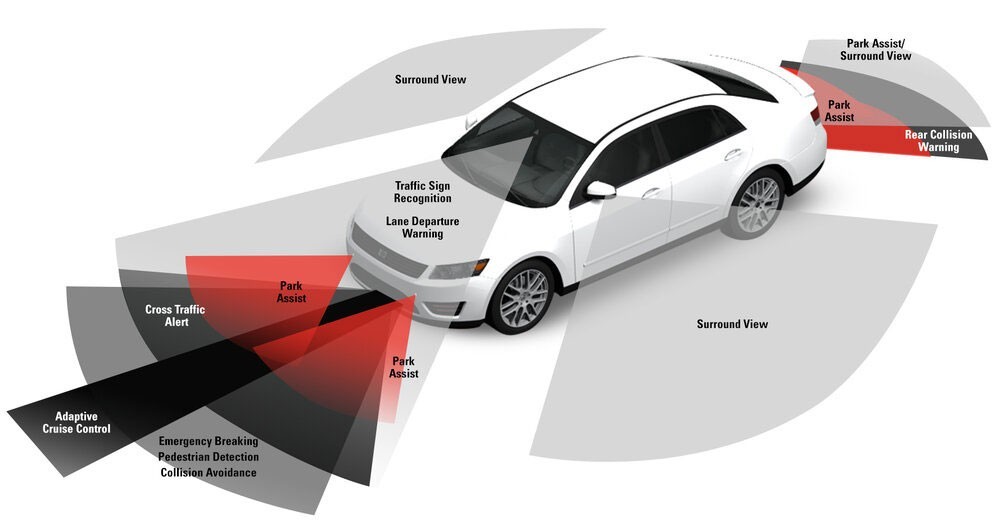

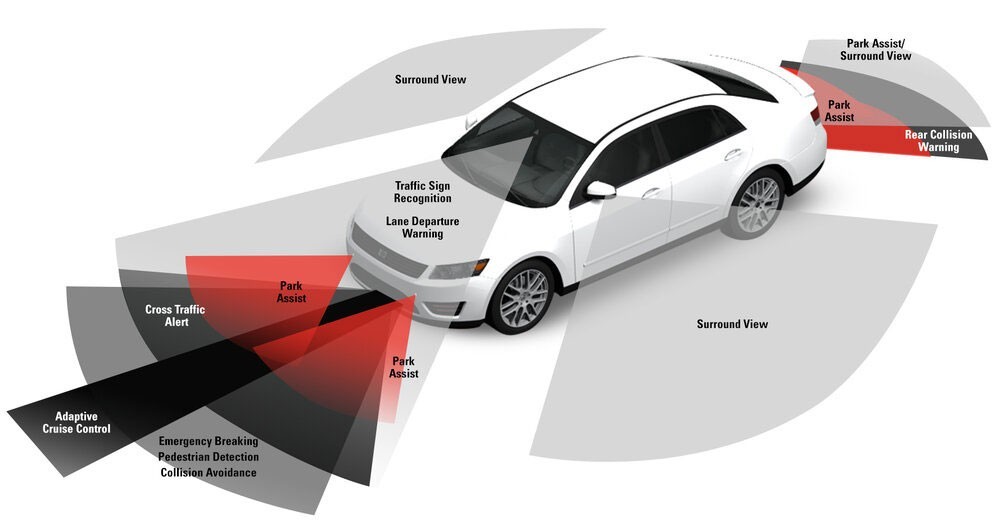

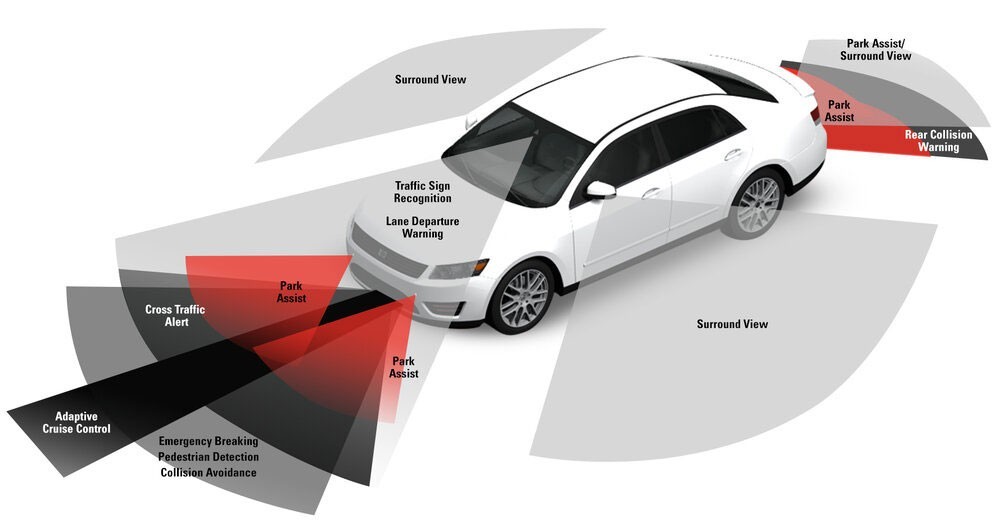

To democratize access to reliable ADAS (Advanced Driver Assistance Systems) by enabling real-time distance estimation using cost-efficient monocular cameras—eliminating reliance on expensive LiDAR/RADAR hardware.

Problem Statement:

LiDAR and RADAR sensors, while accurate, are cost-prohibitive for widespread ADAS adoption. The industry needs a scalable, affordable alternative for Level 3+ autonomy that doesn’t compromise safety.

Product Goal

Develop a lightweight, ML-based vision system that leverages a monocular camera for accurate distance estimation (longitudinal and lateral) between the ego vehicle and nearby objects—enabling affordable ADAS functionality for emerging markets.
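To make "longitudinal and lateral" concrete: under a standard pinhole camera model, once the longitudinal distance Z to an object is known, its lateral offset X follows from the object's horizontal pixel position and the camera intrinsics. The sketch below assumes KITTI-style camera axes (x to the right, z forward) and placeholder intrinsics close to values found in KITTI calibration files; `lateral_offset` and `ground_range` are hypothetical helpers, not taken from the MonoViz code.

```python
import math


def lateral_offset(u: float, z: float, fx: float, cx: float) -> float:
    """Lateral offset X in metres (positive = right of the camera).

    Pinhole model: u = fx * X / Z + cx, so X = (u - cx) * Z / fx.
    """
    return (u - cx) * z / fx


def ground_range(x: float, z: float) -> float:
    """Straight-line distance on the ground plane from camera to object."""
    return math.hypot(x, z)


# Placeholder KITTI-like intrinsics (assumption; substitute your own calibration).
FX, CX = 721.5, 609.6

z = 15.0                      # longitudinal distance, e.g. from a depth model
x = lateral_offset(u=809.6, z=z, fx=FX, cx=CX)
print(f"X = {x:.2f} m, Z = {z:.2f} m, range = {ground_range(x, z):.2f} m")
```

An object centred on the principal point (u = cx) has zero lateral offset, and the offset for a given pixel column scales linearly with depth.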

User Stories

| Title | As a/an | I want to | So that |
|---|---|---|---|
| View Object Distance in Real Time | Driver or test engineer | See accurate object distances displayed on a dashboard | I can make quick, informed decisions in real-time driving conditions |
| Evaluate Object Proximity for Research | Researcher validating detection models | Access X (lateral) and Z (longitudinal) coordinates of objects in a structured file | I can compare outputs with ground truth and fine-tune models |
| Visualize Traffic from Bird’s Eye View | Visual system designer or ML engineer | See objects rendered in a top-down (BEV) layout | I can better understand spatial relationships between vehicles |
| Deploy Model on Lightweight Hardware | Embedded systems engineer | Run the model on edge devices like Jetson Nano | I can minimize hardware costs for real-world applications |
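The research story calls for X and Z coordinates in a structured file. The source does not specify the format, so the sketch below assumes a simple CSV schema (`frame`, `label`, `x_m`, `z_m`) written and read with Python's standard `csv` module; the schema and the `ObjectDistance` record are hypothetical.

```python
import csv
import io
from dataclasses import asdict, dataclass

FIELDS = ["frame", "label", "x_m", "z_m"]  # hypothetical column layout


@dataclass
class ObjectDistance:
    frame: int    # frame index in the sequence
    label: str    # object class, e.g. "Car", "Pedestrian", "Cyclist"
    x_m: float    # lateral offset in metres (positive = right of camera)
    z_m: float    # longitudinal distance in metres (forward)


def write_distances(rows, stream) -> None:
    """Write one CSV row per detected object."""
    writer = csv.DictWriter(stream, fieldnames=FIELDS)
    writer.writeheader()
    for row in rows:
        writer.writerow(asdict(row))


def read_distances(stream):
    """Parse the CSV back into records, e.g. for comparison with ground truth."""
    return [
        ObjectDistance(int(r["frame"]), r["label"], float(r["x_m"]), float(r["z_m"]))
        for r in csv.DictReader(stream)
    ]


buf = io.StringIO()
write_distances([ObjectDistance(0, "Car", 4.16, 15.0),
                 ObjectDistance(0, "Pedestrian", -2.3, 8.7)], buf)
buf.seek(0)
print(read_distances(buf))
```

A flat, one-row-per-object layout keeps the file easy to join against ground-truth labels by frame and class.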

Core Features

Feature | Description | Priority |

|---|---|---|

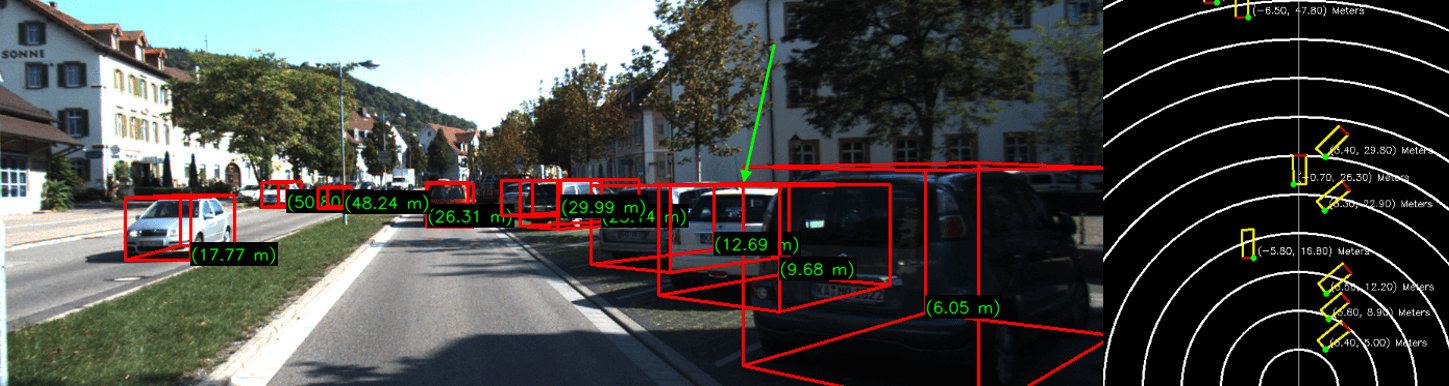

Monocular 3D Object Detection | Uses MonoRCNN + geometric decomposition to estimate real-world distances (X & Z axes) from single images. | P1 |

Bird’s-Eye View Visualization | Projects object locations on a 2D map, aiding spatial awareness for developers and users. | P1 |

Multi-Object Tracking | Simultaneous estimation for vehicles, pedestrians, and cyclists. | P1 |

Real-Time Distance Overlays | Annotates live video feeds with bounding boxes and distance metrics using YOLOv8+Depth estimation. | P2 |

Logging & Evaluation | Generates | P2 |
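MonoRCNN's geometry-based distance decomposition splits an object's distance into two quantities that are easier to learn: the object's physical 3D height H and its projected visual height h in the image, related to depth by Z = f·H/h for a focal length f in pixels. The sketch below shows only this final geometric step, using hypothetical predicted values and a placeholder focal length; the actual model regresses these quantities from image features, which is not reproduced here.

```python
from dataclasses import dataclass


@dataclass
class HeightPrediction:
    physical_height_m: float  # H: predicted real-world 3D height of the object
    visual_height_px: float   # h: predicted projected height in the image


def decomposed_depth(pred: HeightPrediction, fy: float) -> float:
    """Longitudinal distance Z = f * H / h under a pinhole camera model."""
    if pred.visual_height_px <= 0:
        raise ValueError("projected height must be positive")
    return fy * pred.physical_height_m / pred.visual_height_px


FY = 721.5  # placeholder vertical focal length in pixels (assumption)

car = HeightPrediction(physical_height_m=1.5, visual_height_px=54.0)
print(f"Z = {decomposed_depth(car, FY):.2f} m")
```

Because Z is inversely proportional to h, a fixed pixel error Δh shifts the estimate by roughly Z²·Δh/(f·H), so distance errors grow quickly with range. This is consistent with the accuracy drop beyond 35 m listed under Constraints, Risks, and Mitigations.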

Success Metrics

| Metric | Target |
|---|---|
| Short-range distance MAE | < 1.0 m |
| Accuracy within a 10% error margin | > 80% |
| Inference speed | ≥ 45 FPS |
| Hardware cost reduction vs. LiDAR | > $2,000 per vehicle |
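The first two targets can be computed from paired predicted and ground-truth distances. The sketch below assumes that "10% margin accuracy" means the fraction of objects whose absolute error is at most 10% of the ground-truth distance, and borrows the ≤30 m short-range cutoff from the Key Results; both the interpretation and the helper names are assumptions.

```python
def _check(preds, gts):
    if len(preds) != len(gts) or not preds:
        raise ValueError("need equal-length, non-empty sequences")


def mae(preds, gts):
    """Mean absolute error in metres."""
    _check(preds, gts)
    return sum(abs(p - g) for p, g in zip(preds, gts)) / len(preds)


def margin_accuracy(preds, gts, margin=0.10):
    """Fraction of predictions with |p - g| <= margin * g (relative error)."""
    _check(preds, gts)
    hits = sum(1 for p, g in zip(preds, gts) if abs(p - g) <= margin * g)
    return hits / len(preds)


def short_range(preds, gts, max_range_m=30.0):
    """Keep only pairs whose ground-truth distance is within max_range_m."""
    pairs = [(p, g) for p, g in zip(preds, gts) if g <= max_range_m]
    return [p for p, _ in pairs], [g for _, g in pairs]


pred = [9.5, 21.0, 34.0, 47.0]   # hypothetical predicted distances (m)
gt = [10.0, 20.0, 30.0, 40.0]    # hypothetical ground-truth distances (m)
sp, sg = short_range(pred, gt)
print(f"short-range MAE: {mae(sp, sg):.2f} m")
print(f"10% margin accuracy: {margin_accuracy(pred, gt):.0%}")
print(f"20% margin accuracy: {margin_accuracy(pred, gt, margin=0.20):.0%}")
```

Using a relative margin rather than a fixed one in metres keeps the metric meaningful across near and far objects.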

Technical Stack

Models: MonoRCNN, YOLOv8, Dist-YOLO

Frameworks: PyTorch, OpenCV, Detectron2

Dataset: KITTI Benchmark Suite

Outputs: Annotated visuals, Bird’s Eye View, logs, dist.tx
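The Bird’s Eye View output places each object at its (X, Z) position on a top-down map. A plotting library would normally draw this; the stdlib-only sketch below rasterises hypothetical detections into a character grid just to show the coordinate mapping (lateral X to columns, longitudinal Z to rows, ego vehicle at the bottom). Grid extents and cell size are illustrative assumptions.

```python
def bev_grid(objects, x_range=(-10.0, 10.0), z_max=40.0, cell_m=2.0):
    """Rasterise (label, x_m, z_m) tuples into a top-down grid of characters.

    Columns span the lateral range x_range; rows span 0..z_max metres ahead,
    with the farthest band in row 0 so the ego vehicle sits below the last row.
    """
    cols = int((x_range[1] - x_range[0]) / cell_m)
    rows = int(z_max / cell_m)
    grid = [["." for _ in range(cols)] for _ in range(rows)]
    for label, x, z in objects:
        if not (x_range[0] <= x < x_range[1] and 0.0 <= z < z_max):
            continue  # object falls outside the plotted area
        col = int((x - x_range[0]) / cell_m)
        row = rows - 1 - int(z / cell_m)
        grid[row][col] = label[0].upper()  # first letter marks the object class
    return ["".join(r) for r in grid]


detections = [("car", 4.16, 15.0), ("pedestrian", -2.3, 8.7), ("cyclist", 0.5, 31.0)]
for line in bev_grid(detections):
    print(line)
print("ego".center(10))
```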

Key Results

Achieved 93.2% accuracy at a 20% error margin across multi-object scenes

Reduced hardware cost by 78% by eliminating the need for LiDAR/RADAR

Reduced short-range (≤30 m) distance MAE to under 1 m

Delivered within an 8-week sprint despite infrastructure and GPU access constraints

Constraints, Risks, and Mitigations

| Issue / Constraint | Type | Mitigation / Notes |
|---|---|---|
| No use of stereo or depth cameras | Constraint | Focuses on monocular input for cost-effectiveness; future versions may integrate sensor fusion |
| Reduced accuracy in extreme weather (fog, snow, glare) | Risk | Integrate thermal or radar data in future versions to improve robustness |
| Limited generalization beyond the KITTI dataset | Risk | Validate on other datasets (nuScenes, CADC); use domain adaptation techniques |
| Accuracy decreases for objects > 35 m away | Constraint | Acknowledge as a current limit of monocular depth estimation; flag distant objects |
| Potential slow inference on edge devices | Risk | Model optimization (quantization, pruning) and hardware acceleration |
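One way to implement the "flag distant objects" mitigation is to keep far detections but mark their distances as low confidence rather than dropping them. The sketch below uses the 35 m limit from the table as a threshold; the `Estimate` type, the `flag_distant` helper and the display format are hypothetical.

```python
from dataclasses import dataclass, replace

RELIABLE_RANGE_M = 35.0  # threshold taken from the constraint in the table above


@dataclass(frozen=True)
class Estimate:
    label: str             # object class
    z_m: float             # estimated longitudinal distance in metres
    reliable: bool = True  # False once the estimate is beyond the trusted range


def flag_distant(estimates, max_range_m=RELIABLE_RANGE_M):
    """Return copies with `reliable` set by distance; nothing is dropped."""
    return [replace(e, reliable=e.z_m <= max_range_m) for e in estimates]


def describe(e: Estimate) -> str:
    """Human-readable distance label for overlays or logs."""
    suffix = "" if e.reliable else " (low confidence)"
    return f"{e.label}: {e.z_m:.1f} m{suffix}"


for est in flag_distant([Estimate("Car", 18.4), Estimate("Truck", 47.9)]):
    print(describe(est))
```

Keeping the far object visible but marked lets downstream logic decide how much to trust it, instead of silently losing it.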

Business Impact

$2B cost savings potential for automakers at scale

Opened pathways for TCS to collaborate with auto OEMs and Tier 1 suppliers

Positioned as an R&D differentiator in applied AI for automotive use cases

Future Roadmap

Short-Term

Deploy on an edge device (Jetson Nano)

Integrate real-world dashcam input

Mid-Term

Fuse with stereo/thermal/RADAR data

Train on diverse weather datasets (e.g., nuScenes, CADC)

Long-Term

Partner with OEMs for on-road trials

Certify for Level 2–3 ADAS use cases
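Edge deployment on a device like the Jetson Nano commonly leans on the quantization mitigation listed in the risk table, typically through a toolchain such as TensorRT or PyTorch's quantization tooling. The sketch below is neither of those; it only illustrates the underlying arithmetic of symmetric per-tensor int8 quantization on a hypothetical list of weights, where each weight is stored as an 8-bit integer q and recovered as scale × q.

```python
def quantize_int8(weights):
    """Symmetric per-tensor int8 quantization: w ≈ scale * q, with q in [-127, 127]."""
    max_abs = max(abs(w) for w in weights)
    scale = max_abs / 127.0 if max_abs > 0 else 1.0  # map the largest magnitude to 127
    q = [max(-127, min(127, round(w / scale))) for w in weights]
    return q, scale


def dequantize(q, scale):
    """Recover approximate float weights from int8 codes."""
    return [scale * v for v in q]


weights = [0.42, -1.27, 0.003, 0.9]  # hypothetical layer weights
q, scale = quantize_int8(weights)
restored = dequantize(q, scale)
max_err = max(abs(w - r) for w, r in zip(weights, restored))
print(q, f"scale={scale:.5f}", f"max error={max_err:.5f}")
```

Each weight shrinks from 32 bits to 8 at the cost of a rounding error of at most half a quantization step; per-channel scales and calibration on representative data usually reduce that error further.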

More Works

CMU - A Distributed App

APP DEV + REST APIS + CLOUD ANALYTICS

2024

CMU - AI FORGE

STRATEGY + GAME DEV + AI NPCS

2024
